"Curiosity killed the cat" – said no one in reference to customer feedback, ever.

While the old English saying may ring true in areas of life where asking too many questions can turn ugly, being inquisitive about your audience is key to an effective customer experience strategy.

Still, paying attention to trends and changes in audience behavior is just as important as settling on a cohesive survey design approach.

So, how do you, as a marketer or user researcher, find the golden mean between structure and constant adjustment?

I’m going to show you how understanding the fundamentals of survey methodology can boost the quality and relevance of your feedback collection strategy.

You’ll learn how to set your survey efforts up for success by matching the right tools to the types of insights you’re looking to derive.

Whether you’re new to survey design or already have some experience but never got around to catching up on the theoretical basics, you’ve come to the right place.

All set? Let’s begin!

What You Need to Know About Survey Methodology

If you search for the term “survey methodology” online, you’ll see it’s used interchangeably to describe all sorts of survey tools, questionnaire construction methods, or feedback collection hacks.

In fact, they all fit the definition to some extent.

According to the UCLA Labor Center, “survey methodology is the study of survey methods and the sources of error in surveys.”

Errors are all the factors that pull your survey results away from the desired outcome. Survey methodology aims to minimize how often they occur in future surveys.

Now, depending on your previous experience with feedback collection, the word “survey” may bring various pictures to mind.

For some, it’s that evening call from the national opinion center prior to presidential elections. Others are more likely to think of the occasional website pop-up on their favorite online store, or live polls carried out in the street.

These are all examples of various survey instruments. But before we dive in, let’s think about the very core of all feedback collection:

Cause.

Why do you want to survey your audience?

Are you planning to collect feedback as a reactive measure to a single event, or do you want to analyze results for the same question across various points in time?

Are you looking for structured responses that can be quickly analyzed with a data analysis tool, or do you want descriptive answers to open-ended questions?

All these questions, among others, need to be taken into account if you want to optimize your survey design efforts.

So, let’s take a look at the pros and cons of various survey tools, and consider how some of the most popular customer satisfaction metrics can serve your goals.

Survey Instrumentation

At the highest level, surveys can be divided into two groups: questionnaires and interviews.

In questionnaires, participants record their own answers. In interviews, it’s the researcher who takes notes, capturing key takeaways rather than answers per se.

At first glance, you might be tempted to draw a firm line between the two, especially if you’ve come to this post planning to collect feedback through questionnaires only.

Interviews may seem like too elaborate a research method for your current needs. And chances are, your gut feeling is right.

Still, this might change quickly.

As you learn more and more about your respondents, there may come a day when longer conversations make a lot of sense and a world of difference for your product development plan. When that time comes, you’ll know exactly which tool to employ to expand your feedback collection efforts.

Another great thing?

Interviews and questionnaires are pretty much a marriage made in heaven. While each does quite well solo, combined they open up a whole other dimension of powerful feedback. One method can accelerate the other: interviews can complement what you’ve already learned from questionnaires, and questionnaires can pre-qualify the right people for interviews. Pretty great, right?

So, let’s take a look at the ways you can ace the feedback collection game.

Questionnaires

Website questionnaire

In a digitalized world like ours, no one needs to be convinced of the incredible potential of website feedback.

These surveys take on various forms, from large pop-ups in the middle of the screen to discreet widgets on the edge of the browser window.

Here’s a general breakdown of what you can expect from website questionnaires:

Pros:

- With the right tool, you can target an audience as broad or narrow as you like

- The fastest way to collect crucial feedback from your website crowd

- A highly effective way to measure customer satisfaction metrics such as NPS (Net Promoter Score).

Cons:

- If overused, you can exhaust your respondents’ attention or patience and cause survey fatigue

- Without prior knowledge of your audience, you can target users inaccurately and receive unactionable feedback

- It may be difficult to get answers to open-ended questions if you haven’t already shown your respondents that you value their insights and time.

Now, here’s the good news:

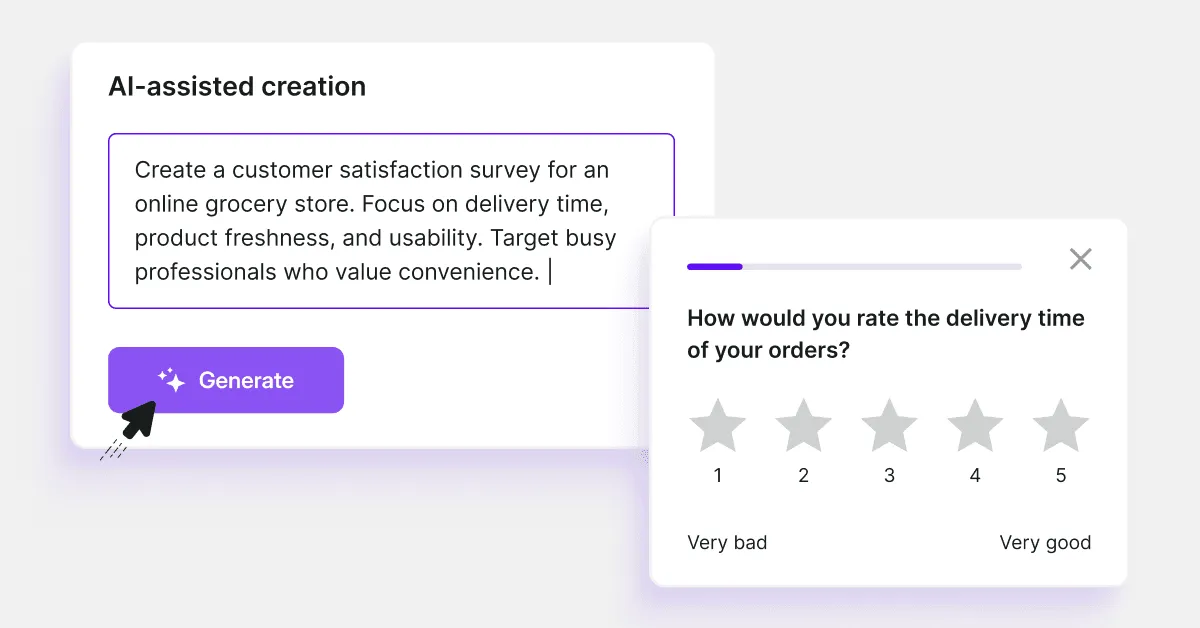

With the right survey tool, you can avoid the downsides described above.

With Survicate, you can target relevant respondents right from the beginning, as you can easily import data you already have on your audience from external marketing and CRM tools.

Depending on your preference, you can also choose to run website questionnaires through your favorite customer communication tools. This approach, loved by many marketers, allows you to use the same tool for all audience communication, as well as cross-analyze and export survey responses within seconds.

Let’s proceed to another type of digital survey…

Mobile app questionnaire

These surveys run inside mobile apps. They are relatively new and, by design, aimed at a specific audience: the app’s own users.

Similar to website questionnaires, mobile app surveys are displayed to users who interact with the product/service.

Pros:

- Ideal for companies that have mobile apps and want to know how users find their way around the app and what they think of the service

- You can reach users “on the go” – ideal for single-question surveys that are quick to answer

- You can target users contextually and ask highly relevant questions.

Potential cons:

- The risk of being quickly dismissed if too many steps or open-ended answers are required (typing on a small screen is inconvenient)

- If a questionnaire covers the entire screen or appears too often, there’s the risk of coming across as obtrusive.

Let’s carry on to the last online questionnaire on the list...

Email questionnaire

Like website questionnaires, emails are one of the fastest ways to reach your audience. Assuming you’re using email addresses your audience has provided (which really is the only way to go), you can count on incredibly valuable feedback from users who have expressed interest in your product or service.

That is, of course, if you approach email surveys strategically.

Pros:

- As with website questionnaires, you can target specific segments of your email list.

- A quick way to reach an engaged audience: assuming the email addresses were provided willingly (e.g., through a newsletter sign-up), your respondents are likely to be more engaged than those who only sporadically visit your website

- Effective for sending targeted customer satisfaction surveys

Potential cons:

- Weak email subject lines can set you up for low response rates. Make sure to stand out!

- If you send out all your questionnaires to the same audience (i.e. no segmentation), you might disturb respondents who are sensitive about spam (which, of course, your emails are not).

Want to hear a pro tip?

Embedding only the first question in an email and displaying the remaining questions in a new window (rather than emailing the full questionnaire) has been shown to boost survey completion rates.

Sounds like something you’d like to try? You can do so on a free Survicate account!

And here's what the same survey might look like when opened in a new window:

Now, let’s put digital questionnaires aside and take a look at some other options.

Traditional mail questionnaire

While it may come as a surprise, many survey methodology resources online report that mail surveys are the most popular feedback collection method.

Whether they’ve been dethroned by digital questionnaires remains unclear, though it certainly seems inevitable, given all the convenience online questionnaires bring.

Even so, paper questionnaires are still appreciated in certain lines of business.

Pros:

- You can collect feedback from clients who did not provide an email address or phone number

- Respondents can fill out the survey whenever it’s most convenient

- Certain groups of respondents may trust a traditionally mailed survey more than an email.

Potential cons:

- Feedback is collected slowly, and response collection can take months

- A lot of paperwork: responses need to be handled and scanned individually before any modern-day analysis can be performed.

Telephone questionnaire

Phone surveys are not as common as they were back in the day, but they’re still a favorite of many companies that rely heavily on sales and customer service teams.

Pros:

- Response rates are high if the respondent actually speaks to a human.

Potential cons:

- Response rates can be very low if the call is automated

- May come across as obtrusive if calls are initiated too often or at the wrong time

- Since the introduction of GDPR and the infamous Cambridge Analytica scandal, respondents have become more reluctant to reveal information on the phone.

In-person questionnaire

These performed much better decades ago, before online survey methods emerged. Nowadays, face-to-face questionnaires have mostly taken the form of meetings via video conferencing software.

Because they’re quite time-consuming, today they’re mostly carried out among random respondents (e.g., on the street), with an incentive for the respondent, or among very specific, carefully selected members of the audience (which in its own right might require a pre-qualifying questionnaire).

Pros:

- Good for modestly sized feedback collection goals

- If carried out on the street, it provides a good overview of opinions among random respondents

- People tend to be more invested in questionnaires in person and may be more willing to respond to open-ended questions.

Potential cons:

- Time-consuming, both for the respondent and researcher

- In-person questionnaires with carefully targeted groups often require running pre-qualification surveys weeks ahead.

Which leads us to... interviews.

Interviews

According to the Geneva Foundation for Medical Education and Research, there are two main criteria for distinguishing between types of interviews:

Interview structure

- Structured (question order is predetermined, researchers only go off script to clarify the question or ask for more details)

- Semi-structured (questions are predetermined, but the researcher is at liberty to use informal language and tweak wording)

- In-depth (questions aren’t predetermined; the conversation relies mostly on the respondent’s subjective point of view and opinions).

Number of participants

- Individual interviews

In-person interviews: these will be invaluable if you’re looking to read between the lines, especially if you adopt an in-depth or semi-structured approach. As with in-person questionnaires, respondents tend to answer more precisely when addressed face-to-face.

Phone interviews: As with phone questionnaires, response rates are high, and even more so because interviews can’t (at least, not yet) be carried out effectively without a fellow human researcher on board. There is a significant difference, though: while phone questionnaires stick to a script, interviews, with their more open nature, encourage respondents to share opinions and, as a result, often yield highly subjective, emotional insights.

- Focus groups

While focus groups aren’t necessarily interchangeable with the other surveys on this list, they’re worth noting nonetheless. Focus groups are a way to trigger discussion on a given subject among several participants. They’re an amazing way to uncover your audience’s attitudes, as they not only promote openness but also show how diverse opinions evolve throughout a live discussion.

Now that you know all the survey instrumentation options, the question is:

What are some telltale signs you’d benefit from a mixed approach?

Here are a couple of examples:

- You keep uncovering recurring behavior within a group that can’t be explained by correlating it with other behavioral or attitudinal data.

- Your respondents are less willing to answer open-ended questions than they used to be and leave more and more fields empty.

- Your respondents answer open-ended questions, but the responses are less insightful than you’d hoped. On top of that, you no longer know how to phrase a questionnaire that prompts a detailed response.

- There’s a very specific user group you’re increasingly interested in hearing more from, ideally in a less structured, one-on-one way.

- You’ve been measuring specific customer metrics via surveys (for example, with an NPS or CSAT survey, as explained below), but the results have been causing confusion. You no longer really know what your respondents think or, more importantly, what they’re actually evaluating.
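If your survey tool lets you export responses, the sign about respondents leaving more and more open-ended fields empty is easy to keep an eye on. Below is a minimal Python sketch, assuming a hypothetical export of (period, answer) pairs; the function names and the “rising trend” rule are illustrative choices, not features of any particular survey tool.

```python
from collections import defaultdict


def blank_rate_by_period(responses):
    """Return {period: share of open-ended answers left blank}, ordered by period.

    `responses` is a hypothetical export format: an iterable of
    (period, answer) pairs, where `answer` is the open-ended text.
    None or an empty/whitespace-only string counts as a skipped answer.
    """
    totals = defaultdict(int)
    blanks = defaultdict(int)
    for period, answer in responses:
        totals[period] += 1
        if answer is None or not answer.strip():
            blanks[period] += 1
    # Sort period labels so the result reads chronologically
    # (assumes labels like "2024-Q1" sort correctly as strings).
    return {p: blanks[p] / totals[p] for p in sorted(totals)}


def blank_rate_is_rising(rates):
    """Illustrative rule: the blank rate never drops between consecutive
    periods and ends higher than it started."""
    values = list(rates.values())
    if len(values) < 2:
        return False
    never_drops = all(a <= b for a, b in zip(values, values[1:]))
    return never_drops and values[-1] > values[0]


# Made-up sample data, for illustration only.
responses = [
    ("2024-Q1", "Love the new dashboard"),
    ("2024-Q1", ""),
    ("2024-Q2", ""),
    ("2024-Q2", "   "),
    ("2024-Q2", "It's fine"),
    ("2024-Q3", None),
    ("2024-Q3", ""),
]

rates = blank_rate_by_period(responses)
for period, rate in rates.items():
    print(f"{period}: {rate:.0%} of open-ended answers left blank")
print("Rising trend:", blank_rate_is_rising(rates))
```

A steadily rising blank rate doesn’t prove respondents have lost interest, but it’s one hint that a few interviews might help you find out why.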

If you're looking to start surveying your user base, sign up for Survicate's 10-day free trial and get access to all of the Best plan features today.
