The pessimism is thick in our Slack channels and conference hallways. I've felt it too, the doubt, second-guessing every report I write, wondering if a prompt could do it faster and cheaper. It's exhausting, but it's also human.

Yet here's what I've come to realize after months of wrestling with it: the fear isn't really about AI replacing us. It's about change hitting faster than we're ready for, and the stories we tell ourselves in the dark.

In this article, I want to share what I've learned as a UX researcher who has worried, experimented, and come out the other side more certain: AI is reshaping how we work, not erasing why we matter.

Can UX researchers pretend AI won’t change their work?

I brought this question to my colleague Weronika Denisiewicz (Senior Product Researcher) to talk it through and hear her take.

We both see the toughest road ahead for entry-level roles. If AI starts handling intern- or junior-level tasks like basic analysis or initial synthesis, breaking into the field could become even harder than it already is.

However, the outlook feels more solid for those of us with experience, as long as we stay proactive and keep up with AI’s capabilities.

The bottom line we’ve both reached is this: if we want to feel secure, even the most experienced among us must befriend AI. Otherwise, we risk falling behind our peers who pair their human expertise with tech.

What AI can and cannot do well – a UX researcher’s perspective

I've spent enough hours staring at AI-generated summaries, affinity maps, and "synthesized" user quotes to know this truth in my bones. AI is incredibly capable... and profoundly limited in the ways that matter most to our craft.

Recently I came across a LinkedIn post from Krzysztof Miotk, a well-known expert in the UX space, who said it bluntly:

If someone tells you AI is replacing UX research, don’t ever hire them to run yours.

His point isn’t that AI is useless – it’s actually quite good, but good in the way a very capable intern is good. If a practitioner truly believes it’s fully taking over thoughtful research work, it usually means they haven’t yet developed the eye to catch every hallucination, every oversimplified insight, every missed emotional cue or cultural subtlety.

As UX researchers we need to know what AI can do well and what it fails at.

What AI can do well

I’ve cross-referenced the tasks I use AI for in my own daily workflow with what other UX researchers report using in the User Interviews AI in UX Research Report. This lets you quickly see how much of what your peers are doing is already part of (or could easily become part of) your own work.

1. Transcription, cleaning, and summarizing at scale.

AI can transform hours of audio or video interviews into clean, searchable text in just minutes. This is hands-down the no. 1 task AI has completely removed from my plate. I’m so grateful for it that I’ll say it straight: I genuinely can’t imagine going back to working without this capability. Also, around three-quarters of UX researchers are already relying on it for transcription and summarization. So, if you haven’t jumped in yet, you’ve got some catching up to do.

2. Data tagging & categorization.

Research repository tools can auto-label quotes, segments, or responses with themes, sentiments, or codes. You don’t have to manually color-tag bits of user interviews or surveys, which is perfect if you’re working with larger data samples or multi-source information.

3. Pattern detection at scale.

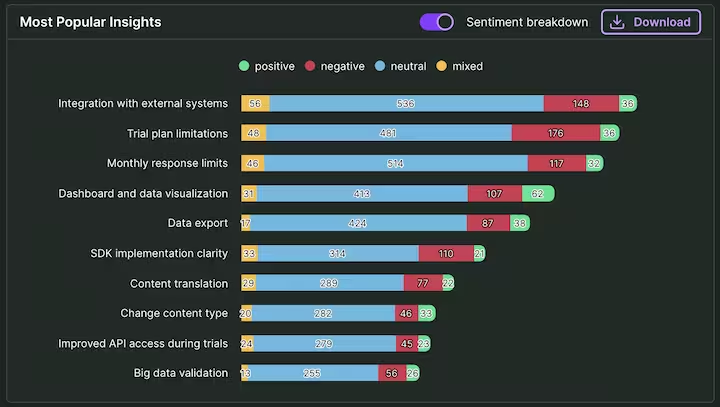

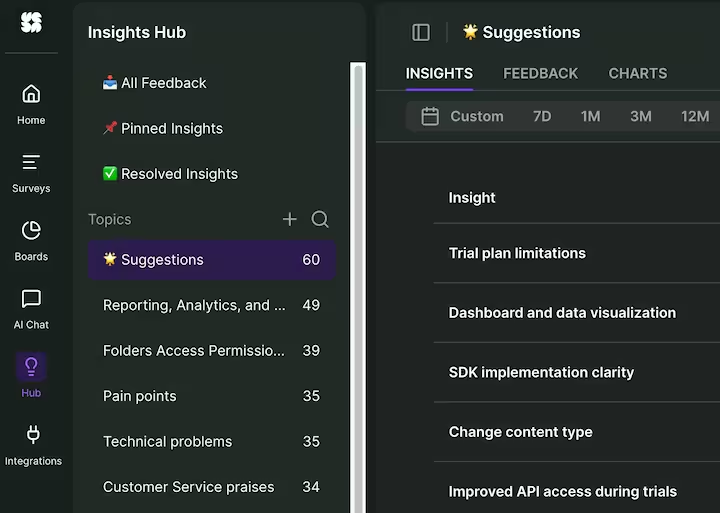

Since AI analyzes the qualitative and quantitative data you upload, it can also spot recurring themes, connections, and outliers. The User Interviews AI in UX Research Report says that about 52% of UX researchers already use AI for this. Personally, I use Insights Hub for this purpose. It analyzes data from multiple sources, grouping same-topic insights into categories for an easy overview. I can also ask a feedback-based chatbot to find a specific piece of information for me, like a quote from an interview I held in Q3 last year. It lets me spot even the most subtle trends that could have slipped past manual reviews.

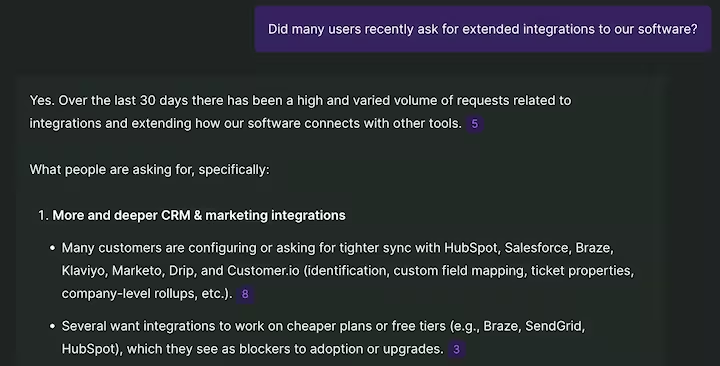

4. Asking AI the fundamental "what happened?" questions.

You can think of AI like a colleague or a sparring partner, depending on what you currently need. I feel that this matters, because many of us UX researchers are one-person teams. I like bouncing ideas off it, like checking if a script aligns with goals, generating first versions, or exploring early patterns. Of course, "analyze the data and write the report" still requires my judgment to land right.

What AI cannot do well (yet)

According to the findings from the same report, AI outputs cannot be taken at face value. They demand rigorous human vetting every time, because the issues are persistent and meaningful. Here are the biggest gaps that keep showing up:

- Strategizing. AI can neatly summarize what users said, but it struggles to connect those patterns to what actually matters for the business or product. It lacks the deep organizational context – stakeholder priorities, past decisions, resource realities – that lets us weigh trade-offs, craft persuasive stories, and land on recommendations that stick. We remain the bridge from "here's what we heard" to "here's what we should do about it."

- Scoping and goal-setting. Sure, 55% of us use AI to draft plans or objectives in the design phase, but without tight prompting and heavy review, the outputs often feel generic or misaligned. AI can't independently nail precise scope, define meaningful success criteria, assess insight quality, or factor in real-world limits like budget, timeline, or risk. Human expertise keeps the work focused and credible; otherwise, it's easy to wander into scope creep or dead-end tangents.

- Creativity. AI excels at remixing familiar patterns and generating variations on what's already known, but it rarely delivers those genuine "aha" breakthroughs or emergent ideas that redefine the problem. Outputs can come across as unoriginal or vague until a human steps in to refine them and connect unexpected dots.

- Ethics. AI has no independent compass for detecting subtle biases in data or its own outputs. Human oversight is necessary to preserve rigor and accountability, protecting both users and the integrity of our work.

- Human perception. In both analysis and moderation, AI fails to comprehend nuances and contradictions or to take the whole context into account: what happened during the interview, how the respondent behaved, and what their body language and tone of voice were like.

These aren't minor flaws; they're core to why great research feels human. The report doesn't paint AI as useless – far from it actually – but it reminds us that the tool's real power shows when we stay firmly in the driver's seat, applying judgment and ethical awareness no model can replicate (yet).

How do we start using AI in a way that’s actually meaningful?

Whether you’re enthusiastic about AI or not, the fact is it’s here to stay. Sooner or later, we’ll all have to apply it in our work, so here’s Weronika’s and my advice on how to start using it to your advantage.

Partner with AI to deliver value faster

As UX researchers, we've all felt that knot in our stomach when someone says, "Can't AI just do this?" You might think we fear the tool. We don’t. It’s the non-research folks using AI we’re most concerned about. They lack the methodological grounding to spot flaws, so they trust shaky outputs that lead to flawed decisions down the line.

"People still see traditional research as too slow or expensive, so they're tempted to lean on AI harder. At first glance, it might look like a quick win – you know, fast summaries, neat themes – but without oversight, it risks poor insights and costly business missteps."

We can flip this narrative by showing up as accelerators, not gatekeepers who slow things down. Demonstrate quick wins right away. How?

Here's one practical tip from me: run a side-by-side comparison. Take a behavioral method like usability testing – create one report from a researcher using AI as an assistant, and another from AI alone. The differences jump out, especially in bridging what users do versus what they say. AI might miss subtle frustrations, workaround behaviors, or emotional cues that scream "this design fails in the real world." Showing that gap visually or in a quick demo will make the human edge undeniable.

Take the lead and own the business impact

We do the hard work of uncovering insights, then... hand them off. We inform, but too often stop short of driving action. That's where we lose sight of real business value, and it's a pattern I've seen time and again.

"How to get closer to the business" is one of the most repeated questions in rooms full of researchers. It's rarely part of our formal education or early training, so we show up strong on methods and empathy, but unsure how to translate findings into strategic moves that stakeholders actually run with.

In the AI age, we can't differentiate just on analysis anymore – especially not on the kind of simple analysis AI can now generate instantly. To stand out and thrive, we shift toward leadership:

- Judge what's strategically important

- Move beyond hand-offs

- Align stakeholders around evidence

- Build lasting assets

By stepping up as strategic partners, we move beyond just reporting data to actually shaping the business. That’s a role no AI can easily replicate. This shift – from researcher to change agent – is a serious leap that demands new muscles: project management, business analytics, and leadership training. It’s a different job entirely, and it’s where our future lies.

Use AI tools that take in your unique data and business context

Are you still relying solely on a general-purpose AI (like ChatGPT, Claude, and others) in your work? These tools can be great for validating market assumptions, spotting trends, or analyzing competitor reviews at scale. But when it’s time to make recommendations for your product, like feature priorities, interface tweaks, or broader strategic choices? Well, that’s where the party ends.

These models pull from everywhere, so they might give you insights that aren’t grounded, and you can’t really verify their accuracy. They don’t transparently show how they’ve used your data to arrive at a specific conclusion. To prove your value, you need AI locked to your data only.

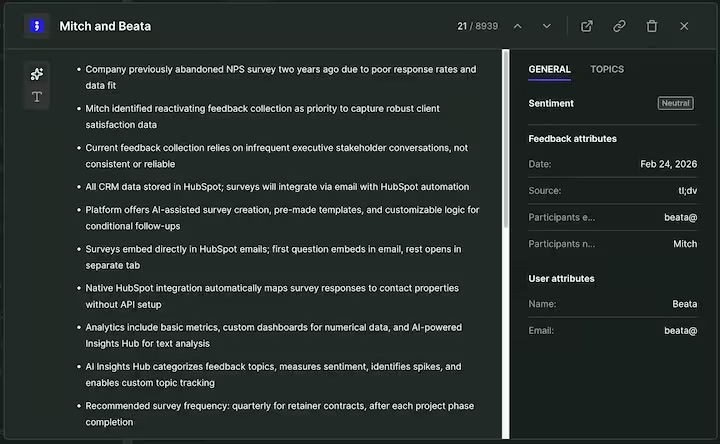

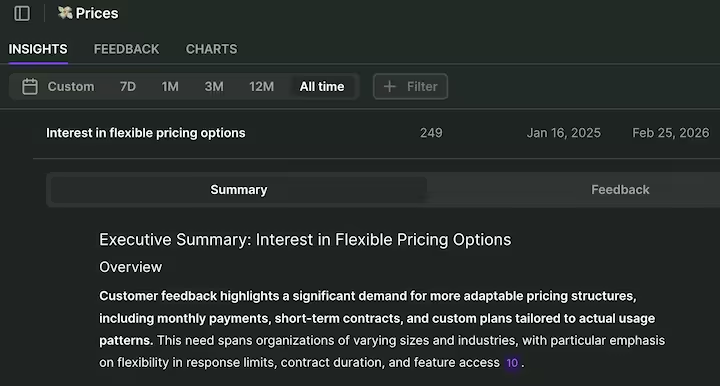

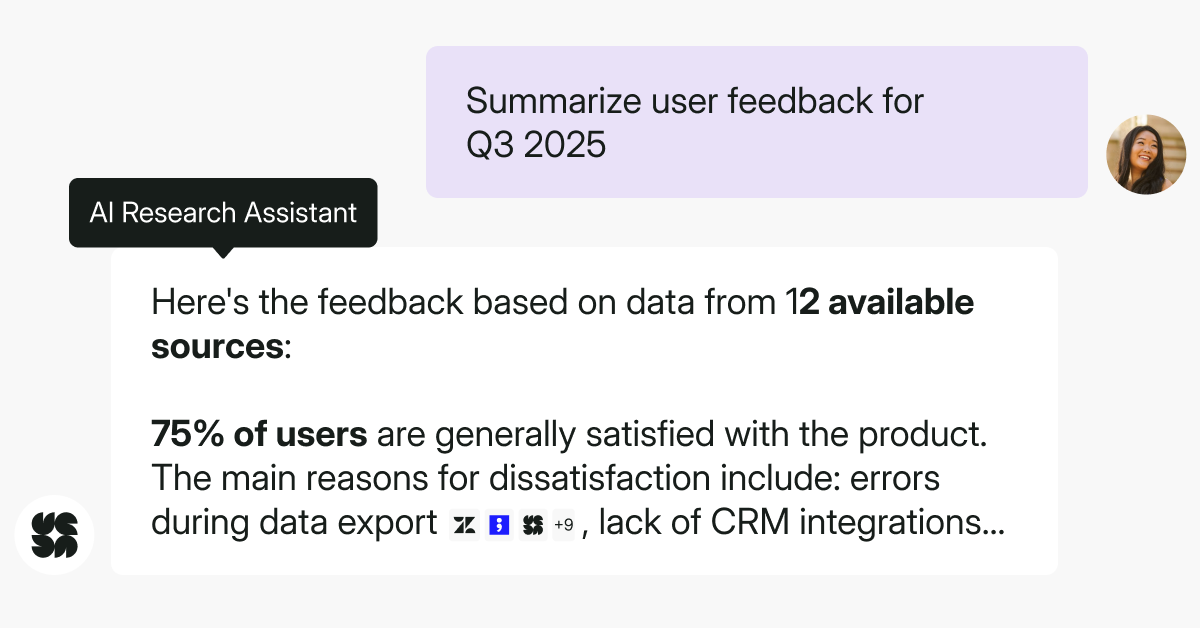

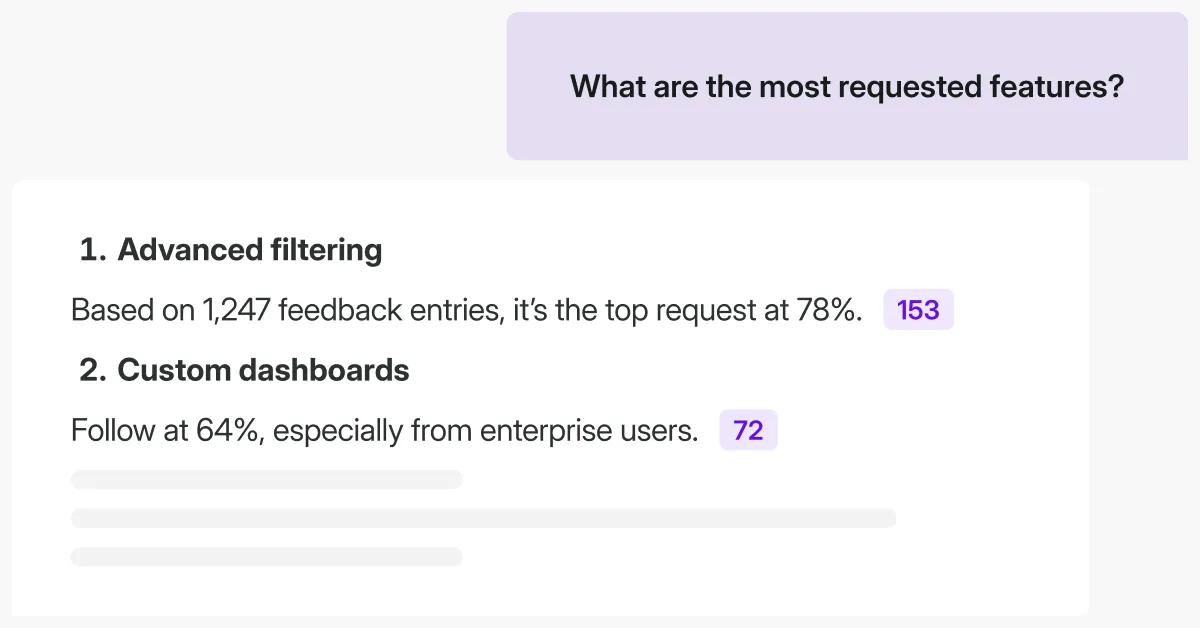

Insights Hub can do that for you. The platform can connect directly to 40+ native integrations, including HubSpot, Salesforce, Braze, Zendesk, and Slack. Data is then pulled in, categorized, and analyzed automatically in real time.

You can also upload data freely from third-party sources, e.g., your surveys, transcripts, or CSVs, and filter insights by source.

Let’s say that you’ve noticed an uptick in requests for flexible pricing, and that it has started affecting your company’s CSAT scores.

Everyone’s wondering – why, and why now? Is a competitor entering the market on the same price tier? Or has your customer mix shifted to more temporary or budget-sensitive users? If it’s the second, that’s a product-specific insight you won’t be able to discover using generic AI. But you can do so using your internal AI tools.

In Insights Hub, you can cluster related comments from your own data, and then cross-check broader market trends if needed. Notice how this kind of insight could rewrite product roadmaps, reshape marketing playbooks, or introduce new sales personas.

Lead the conversation on user insights and bring them directly to the stakeholders who matter. When you transition from 'data provider' to 'strategic advisor,' your AI anxieties will naturally fade.

Don’t worry about AI – work alongside it

If you had asked me a few years ago, I would have described my approach towards AI as "reluctant acceptance" (in my defense, who didn't feel that way back when AI tools couldn't even access the internet, right?). But after months of reflecting on AI's limits and using it as a resourceful assistant in my work, I’ve come out the other side genuinely excited.

Through that process, I finally realized what got everyone worried in the first place. It wasn’t AI itself, but that change was moving faster than our confidence could catch up. As this overlapped with layoffs in the tech industry, we told ourselves stories that made the AI revolution feel bigger and darker than it really is.

Will UX research be replaced by AI? If you are carrying that worry right now, I want you to hear my take loud and clear.

AI is not wiping away user research work for humans. It’s just reimagining how we must handle our daily work to stay relevant. AI can take on the repetitive load that drains time and focus. It gives back space for judgment, interpretation, and the kind of thinking that is distinctly human.

I believe there will never be a stronger combination than human judgment working alongside AI, each doing what the other isn’t as good (or fast) at. So, be bold, and claim that ground for your work.
